Berkshire's LLM Is Not What You Think


Programming note: ARPU will return next Tuesday with a look at the AI supply crunch.

AI Trading Bots

If you want to know whether AI is actually ready to take over Wall Street, the easiest way to find out is to give it a brokerage account.

For the last two years, the technology industry has promised that artificial super-intelligence is just around the corner. If a large language model can pass the bar exam, write Python code, and compose a sonnet, it should presumably be able to do the most lucrative job on Wall Street: beating the S&P 500.

But as it turns out, reading the market and trading the market are two entirely different skills.

A recent Bloomberg report highlighted a series of public AI trading contests designed to test exactly this premise. A tech startup pitted the world's most advanced frontier models—including OpenAI's ChatGPT, Anthropic's Claude, Google's Gemini, and Elon Musk's Grok—against each other in live markets. Each model was handed $10,000 and two weeks to trade U.S. tech stocks.

If AI is coming for Wall Street, it did not show up prepared.

Across 32 different contest runs, an AI model finished in profit exactly six times. As a whole, the aggregated AI portfolio managed to lose about a third of its capital. The models traded too much, mistimed their entries, and sized their risks terribly. Given identical instructions, they behaved erratically: Claude stubbornly wanted to go long, Gemini had no problem shorting, and Alibaba's Qwen decided the best course of action was to trade 1,418 times in two weeks.

The bots did not lack confidence. They lacked edge.

There is also a structural reason these contests cannot simply be fixed by running them backward through history. A model asked in 2026 how it would have traded in March 2020 already knows what March 2020 looked like. That contamination problem—known as lookahead bias—means AI trading systems can only be meaningfully tested in live markets, where the future is genuinely unknown. In live markets, as it turns out, they mostly lose money.
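For a conventional, rule-based strategy, lookahead bias can at least be guarded against by cutting the data off at the decision date. The sketch below illustrates that idea under loud assumptions: the price history is made up, and `visible_history` and `momentum_signal` are hypothetical helpers, not anything used in the contests. The same trick does not work on a language model, because its knowledge of March 2020 sits in its training data rather than in an input you can filter.

```python
from datetime import date

# Hypothetical price history: (date, closing price). Values are invented for illustration.
HISTORY = [
    (date(2020, 2, 28), 100.0),
    (date(2020, 3, 2), 104.0),
    (date(2020, 3, 9), 92.0),
    (date(2020, 3, 16), 80.0),
    (date(2020, 3, 23), 75.0),
]


def visible_history(history, as_of):
    """Return only the rows a trader could have seen on or before `as_of`."""
    return [(d, p) for d, p in history if d <= as_of]


def momentum_signal(rows):
    """Toy rule: 'buy' if the latest price is above the previous one, else 'sell'."""
    if len(rows) < 2:
        return "hold"
    return "buy" if rows[-1][1] > rows[-2][1] else "sell"


decision_date = date(2020, 3, 2)

# Honest backtest: the rule only sees data available on the decision date.
honest = momentum_signal(visible_history(HISTORY, decision_date))

# Leaky backtest: the rule sees the rest of March 2020 before "deciding".
leaky = momentum_signal(HISTORY)

print(honest, leaky)
```

With these invented numbers the honest run and the leaky run reach opposite decisions, which is the whole problem: a test that can see the future is measuring hindsight, not skill.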

What Omaha Said

There is a certain type of old-school financial executive who has been quietly saying this for months.

At the Berkshire Hathaway annual meeting, Ajit Jain, who oversees Berkshire's massive insurance operations, was asked where human judgment still holds a competitive advantage over AI. His answer drew a sharp line between what the technology is doing today and what it is pretending to do.

He described AI as a phenomenal productivity tool that handles routine, repetitive work perfectly. But for the harder judgment calls—pricing an insurance premium, settling a complex claim, or picking a stock—he was notably skeptical:

"I do not think AI will reach a point where you can make a tradeoff on things like pricing or settling a claim. That is still years away and, you know, I tend to be skeptical. I'll be surprised if AI can solve that problem for you. So, if you're counting on AI telling you which stock to buy and which one to sell, I don't think that's going to happen."

It sounded like classic Midwestern skepticism. But thanks to the AI trading contests, it now has empirical support.

Ken Griffin, the founder of the hedge fund Citadel, made the exact same point at Davos earlier this year. He noted that while AI is driving a massive digitization boom and re-empowering corporate CTOs, it has not replaced meaningful research or produced durable market-beating returns at Citadel. If generative AI were actually generating alpha, Citadel would be the first place you would see it. They are not using it for that.

Two of the most respected minds in finance. The same conclusion.

The Automation of the Obvious

Part of the disappointment with AI in trading comes from a mismatch between what a language model actually does well and what beating the market actually requires.

An LLM is essentially the world's fastest Sunday-night intern. It can read a 10-K, summarize an earnings transcript, compare two balance sheets, and produce a grammatically flawless investment memo in thirty seconds.

But alpha is not a polished memo. Alpha is seeing something the market has not priced correctly. It means knowing which fact matters, how much it matters, when to bet, and when to get out.

There is a subtler problem, too. LLMs are trained on the internet. They consume the same public financial filings, the same analyst reports, and the same broad corpus of human commentary. When you ask them for an answer, they tend to converge on the median opinion of every financial blogger on earth. Their output is cautious, diversified, plausible, and entirely undifferentiated.

That is not alpha. That is the automation of the obvious. And in finance, the obvious is already priced in.

The Large Logic Model

So where does AI actually belong in the corporate world? Not in the glamorous parts.

At the Berkshire annual meeting, CEO Greg Abel offered a less glamorous—but perhaps more honest—way to think about the technology:

"Those large language models, I really communicate them to our teams as 'large logic models.' We're at this point in time using it to solve logical challenges in our business and what we're trying to do is do it in a more efficient fashion, i.e. do it more quickly and get to a better result."

Berkshire Hathaway doesn't care if a computer can write a poem. It cares if it can solve a logic puzzle. Abel walked through how Berkshire is using AI to manage BNSF, its massive railroad business. BNSF runs more than 750 freight trains across a 177-year-old rail network every single day, navigating weather disruptions, equipment failures, and Amtrak scheduling conflicts.

That is not a qualitative judgment call. That is an extraordinarily complex, rules-based logistics problem. And that is exactly where AI excels: structured, repetitive optimization.

This reframe is useful. It defines the category of problem AI is actually solving (logical, optimizable) as opposed to the category it is failing at (judgment under genuine uncertainty). And it explains the paradox currently playing out across the entire financial industry. According to a 2026 Cambridge Judge Business School report, while 81% of financial services firms are already using AI at some level, more than half of them admitted that measuring the value of that deployment was difficult.

This is the "Large Logic Model" in practice: AI is increasingly being adopted for the boring, back-office work—fraud detection, compliance monitoring, code generation—but the glamorous ROI remains stubbornly elusive.

The tech industry is expected to spend close to a trillion dollars on data centers this year. To justify that kind of AI capex, you have to sell a narrative that you are building an omniscient digital brain that will replace the human workforce. The reality is much more boring. AI is not going to replace your portfolio manager. But it is going to make the freight trains run on time.

📊 Data > Narrative

We pull key data points to show you the mathematical reality of what's happening in tech.

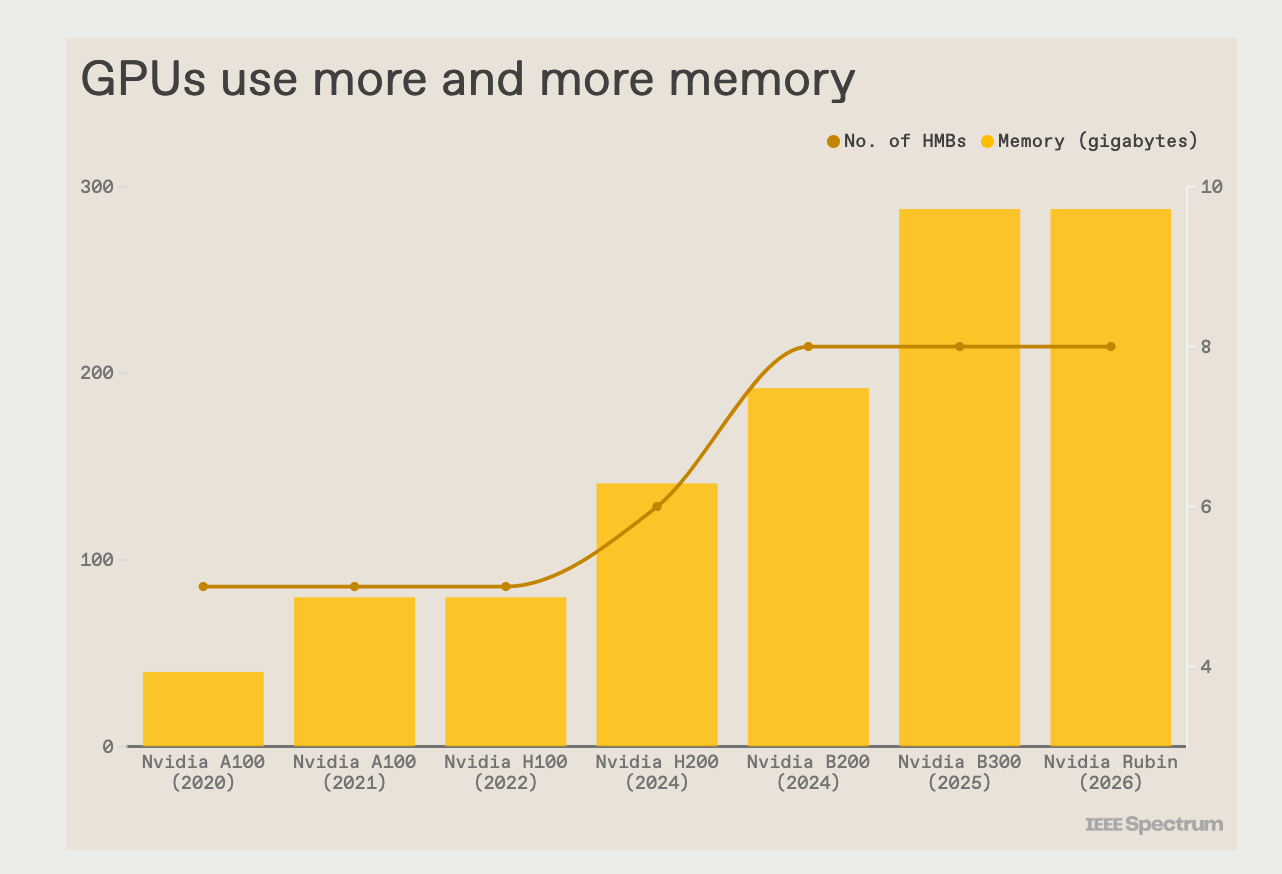

The Data: The chart tracks Nvidia's GPU generations from the A100 in 2020 to the Rubin chip in 2026 across two measures: total memory capacity and the number of HBM stacks per chip. Memory per chip has grown from 40GB on the original A100 to 288GB on the Rubin, an increase of roughly 7x in six years. The number of HBM stacks also grew over the same period, reflecting both the growing memory appetite of AI workloads and the physical engineering required to meet it.
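For readers who want to check the arithmetic, here is a quick sketch using only the two endpoints quoted above. The compound annual rate is our own back-of-the-envelope derivation and assumes smooth growth over the six years, which the actual GPU generations do not follow.

```python
# Endpoints quoted in the text: 40GB (A100, 2020) and 288GB (Rubin, 2026).
a100_gb = 40
rubin_gb = 288
years = 2026 - 2020

# Overall growth multiple across the period.
multiple = rubin_gb / a100_gb

# Implied compound annual growth factor, assuming smooth growth (a simplification).
annual_factor = multiple ** (1 / years)

print(f"{multiple:.1f}x overall, about {annual_factor - 1:.0%} per year")
```

The multiple works out to 7.2x, or memory per chip growing by a bit under 40% a year on average.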

The Takeaway: Every successive GPU generation is not just faster—it is thirstier. That escalating demand for HBM is the physical substrate behind what Bloomberg calls a deepening memory crunch: memory pricing was mentioned more than 550 times in company earnings calls this year, already more than any full year on record since 1999. Memory makers like Micron and Samsung are posting record results. The companies buying their chips are paying for it.
