The Wrong Nvidia Moat

Sign up for ARPU: Stay informed with our newsletter.

Programming note: we will return next Friday with some thoughts on Oracle.

Electrons In, Tokens Out

In a podcast interview this week, Jensen Huang described his company in terms somewhere between engineering and philosophy: "The input is electrons, the output is tokens, and in the middle is Nvidia." It is also, conveniently, a description in which Nvidia sits in the only position that matters.

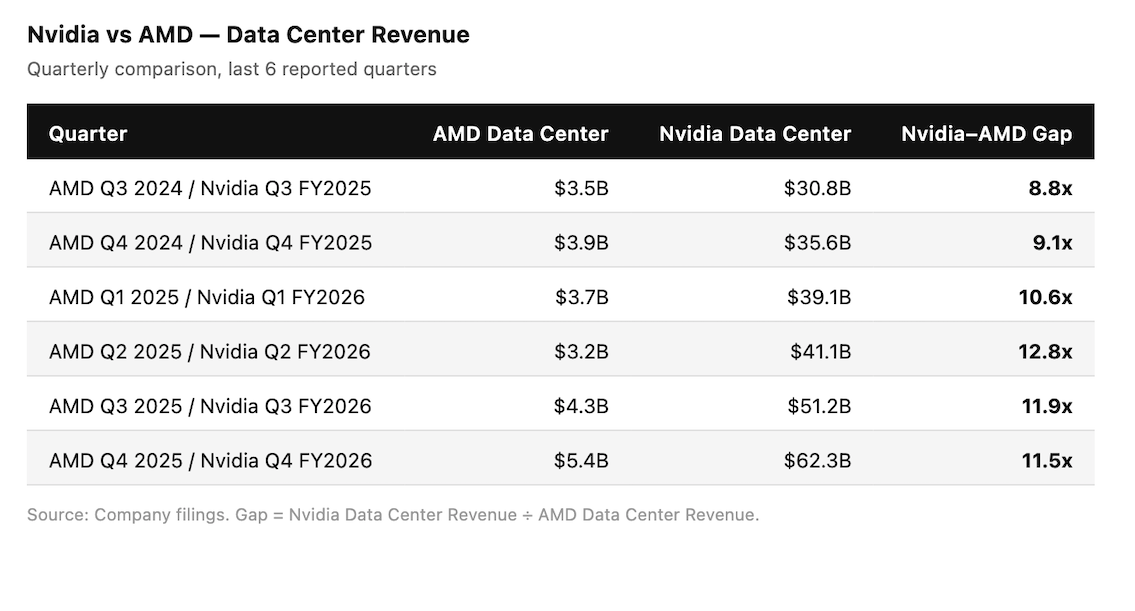

The awkward thing is that this is barely a metaphor and, for now, an increasingly literal business description. Over the last six quarters, Nvidia's data centre revenue grew from $30.8 billion to $62.3 billion. AMD's—still the most credible hardware challenger—grew from $3.5 billion to $5.4 billion. The gap did not close. It widened, from under 9x to over 11x (see table in Data>Narrative section below). At this pace, "credible challenger" starts to sound less like a competitive assessment and more like a courtesy title.

From the outside, Nvidia's dominance looks like product leadership. From the inside, it looks more like a tollbooth attached to the power grid. Every time an AI model generates a chat response, writes a line of code, or hallucinates a legal citation, something has to turn raw electricity into that output. Nvidia built the machine that does the turning. Then it built the software that runs the machine. Then it built the network that ties the machines together. Then, more importantly, it made sure a large part of the industry would have a hard time building enough alternatives quickly.

The market's favorite explanation for what enabled Nvidia's dominance is CUDA. But that is probably too simple.

The CUDA Myth

The standard story goes something like this: the company's moat is CUDA, its software platform; developers learned it, built on it, and now nobody can leave. Huang's version is that CUDA's value is not really the code. It is the install base:

If you're a developer building anything at all, the single most important thing you want is an install base. You want the software you write to run on a whole bunch of other computers. You're not building software just for yourself.

We have several hundred million GPUs out there now. Every cloud has it...We're literally everywhere. The install base means that once you develop the software or the model, it's going to be useful everywhere.

There is a lot of truth in that. CUDA is free, mature, widely supported, and deeply embedded in the muscle memory of a generation of engineers. For startups, enterprises, and ordinary developers who want tools that work now and run almost everywhere, that matters a great deal.

But the more interesting question is whether it matters most to the customers who actually drive Nvidia's economics.

That answer is less obvious than Nvidia would like it to be.

The sophisticated exceptions are not hard to find. OpenAI built Triton, a Python-like alternative that gives its engineers tighter control over how workloads run on the hardware. DeepSeek famously bypassed standard CUDA in parts of its model, dropping down to PTX–a lower-level language–to squeeze out performance that CUDA's abstractions could not reach. Google has long relied on TPUs and TensorFlow. Anthropic has leaned heavily on Amazon's custom silicon. Meta has pushed deeper into custom chips through Broadcom.

These are not fringe users tinkering at the edge of the market. These are some of the largest, best-funded, and most important buyers in AI. That matters because roughly 60% of Nvidia's revenue comes from five hyperscalers. The customers writing the biggest checks are also the ones least trapped by CUDA in the way the consensus story assumes. They have the engineering talent, the scale, and the financial incentive to route around it.

CUDA still matters. It just matters most to the long tail of the market: startups, enterprises, researchers, and everyone else who cannot afford armies of kernel engineers. For them, Nvidia's software stack remains a huge advantage. Writing in something else is an option in the same way learning a third language is an option: technically possible, professionally inconvenient, and therefore mostly theoretical.

But the long tail is not the same thing as the center of gravity. If the largest customers can and increasingly do live outside the CUDA universe, then CUDA cannot be the core explanation for Nvidia's economics. It may be a layer of stickiness. It is probably not the moat people imagine.

What Nvidia Actually Sells

If CUDA is not the whole story, what is?

The answer is not as elegant, but it is probably more important: Nvidia sells the fastest deployable path to large-scale AI capacity.

That includes the chips, of course. But it also includes the interconnect, the system design, the software, the support, the roadmap, the manufacturing commitments, and the convenience of buying a working answer now instead of a theoretically better answer later. Nvidia is not just selling semiconductors. It is selling a way to turn capex into usable compute without forcing the customer to invent half the stack themselves.

This is where NVLink matters. At large AI scale, the bottleneck is increasingly not the speed of any one chip, but the speed at which thousands of chips can talk to each other. Nvidia has inserted itself into much of that conversation. Above that sits the systems layer: complete racks, networking, integrated AI "factories," and now Vera CPUs for orchestrating the whole operation.

That is a more useful way to think about the company. The real product is not CUDA plus GPUs. The real product is a full system that works now, at scale, in a market where everyone is in a hurry.

Time to deployment is its own moat.

A hyperscaler can, in theory, design a better chip for a particular workload. In some cases, Google already has. But designing a custom accelerator takes years. Manufacturing it takes more time. Standing it up at scale takes more still. And by the time the whole thing is in production, Nvidia is already on the next roadmap slide.

The Blackwell-to-Vera-Rubin-to-Feynman cadence—one major architecture per year, reliably, without exception—is not just an engineering achievement. It is also a very effective piece of theater, because it makes every competing roadmap look less like a plan and more like an attempt to catch up.

In a land grab, the best product is often the one that is already assembled.

Funding the Exit

This is what makes Nvidia's customer base so strange.

Its biggest customers are also the ones most motivated to escape.

Huang describes the current arrangement as a flywheel. Because Nvidia is everywhere, startups build on Nvidia. Because startups build on Nvidia, hyperscalers have to stock Nvidia to serve them. Because hyperscalers stock Nvidia at scale, suppliers commit more capacity to Nvidia's roadmap. That flywheel is real, at least while customers keep funding it.

A less flattering description is that five companies are spending tens of billions of dollars a year to reinforce the dominance of a supplier they are simultaneously trying to escape—and, quarter after quarter, mostly succeeding in making the dependency larger and more expensive.

This is not loyalty. It is transitional dependence.

Google has TPUs. Amazon has Trainium and Inferentia. Meta has extended its custom ASICs partnership with Broadcom through 2029. Broadcom's own latest quarter makes the point more clearly than any strategist could: AI-related revenue rose 106% year over year to $8.4 billion in fiscal Q1 2026, driven by strong demand for custom AI accelerators and Ethernet switching. That is the outline of an alternative market structure.

That point is worth qualifying, though, because "custom" is not the same thing as "cheap." Broadcom's Semiconductor Solutions segment posted gross margins of roughly 68% in FY2025, which suggests these chips are not exactly being handed out at Costco prices. The risk to Nvidia, then, is not that AI compute suddenly becomes a low-margin commodity. It is that customers may decide they no longer need to pay Nvidia’s universal premium for every workload, so long as a custom chip is good enough and available at scale.

The Reservation System

There is another reason Nvidia has been so hard to dislodge: it booked tomorrow's bottlenecks yesterday.

If you want to beat Nvidia today, you do not just need a better chip design. You need a time machine to go back three years and outbid it for packaging capacity at TSMC.

Building a frontier AI chip requires not just design talent but access to a narrow set of constrained inputs: leading-edge wafers, advanced packaging like CoWoS, and high-bandwidth memory from a small handful of suppliers. None of those expands overnight. All require suppliers to commit capital well before demand is fully realized. As of early 2026, Nvidia had disclosed roughly $100 billion of manufacturing, supply, and capacity commitments.

That is a real advantage. It gives Nvidia's roadmap a credibility that most rivals cannot yet match. It makes the company's future product cadence sound less like aspiration and more like procurement.

But reservation is not the same thing as destiny.

Supply-chain lockup is powerful because it delays competition. It buys time. It makes Nvidia the easiest answer in the near term. What it does not do is settle the longer-term question of how much of the AI market remains worth serving with Nvidia's full-stack premium once alternatives improve.

The Monetization Problem

The deepest uncertainty in the Nvidia story is not demand. Demand is obvious.

Hyperscalers and frontier labs are spending as if compute is the scarce input and underbuilding would be the cardinal sin. That may be right. But it is also possible that a meaningful share of the current spending boom reflects urgency, fear, and competitive positioning more than settled economics. It is one thing to buy the fastest deployable system in the middle of a land grab. It is another to keep paying premium prices once finance teams start asking what the tokens are actually worth.

That distinction matters because Nvidia's present advantage is being financed by extraordinary demand. The durability of that advantage may depend on whether extraordinary demand turns into extraordinary profits for the customers buying all this hardware. If monetization remains murky, buyers become more price-sensitive. And a more price-sensitive market is exactly where custom accelerators, workload-specific silicon, and cheaper compute tiers become more dangerous to Nvidia's margins.

One reason to be cautious is that more AI demand does not automatically mean more demand for Nvidia's most expensive chips. The bullish leap is from "agents are coming" to "therefore everyone will need more frontier GPUs." OpenClaw suggests that leap may be too neat.

When OpenClaw triggered its recent mini agentic craze, people did not respond by buying Nvidia DGX stations. They bought Mac minis. They bought memory-heavy MacBooks. In China, even secondhand Apple laptops reportedly saw prices rise. That is a very specific kind of signal. It suggests that, for many agentic use cases, people are perfectly willing to accept hardware that is not especially fast, not especially glamorous, and certainly not optimized for peak token throughput—provided it is private, local, affordable, and good enough.

That does not break the Nvidia story. But it does complicate it. The agentic future, if it arrives, may not belong exclusively to whoever owns the fastest datacenter chips. A lot of it may belong to whoever offers the best return on inference, the cheapest persistent compute, or the most convenient local machine. Training frontier models and serving the most demanding inference workloads will still require enormous datacenter-scale compute. But a broad commercial agent market may care less about benchmark supremacy than about whether the task gets done cheaply, privately, and without too much waiting.

That is the part the Nvidia bull case often glides past. A world full of agents is not necessarily a world full of premium tokens. It may also be a world full of "good enough" economics. And that is a very different market structure from the one implied by the idea that all meaningful AI demand inevitably flows toward the highest-end infrastructure.

In other words, Nvidia may be winning today because the AI industry is in a hurry. The more uncomfortable question is what happens when the industry starts doing math.

How the Moat Might Leak

That is the real bear case for Nvidia.

Not collapse. Not some cinematic moment in which a rival unveils a better GPU and the whole market suddenly defects. The more plausible risk is negotiated erosion. If the AI industry becomes more disciplined about return on investment, then Nvidia does not need to lose its lead to lose some of its leverage. It only needs customers to become more selective about when the full Nvidia premium is actually worth paying.

That is already the direction of travel. The hyperscalers are not trying to replace Nvidia next year. They are trying to reduce the dependency over a decade, one workload at a time, starting with the ones where custom silicon already works well enough. Inference is the obvious target: it requires less flexibility than training, runs the same operations repeatedly, and represents a growing share of total compute spend as AI moves from development into deployment. A TPU or Trainium chip that handles AI workloads 30-40% cheaper is not a mortal threat in year one. It is a renegotiated contract in year five.

The key point is that Nvidia does not have to be displaced for its economics to change. It is enough for the market to segment. Frontier training can remain on Nvidia. Premium inference can remain on Nvidia. But if a large and growing share of agentic, enterprise, or background workloads drifts toward custom silicon, local devices, or cheaper "good enough" infrastructure, then the market starts to look less like a universal tollbooth and more like a series of negotiated lanes.

Margins are informative. Nvidia's non-GAAP gross margin dipped from 75.5% to 71.3% in FY2026, partly because of product transition costs and partly because export controls turned the H20 into a $4.5 billion inventory headache. Nvidia expects margins to recover into the mid-70s later this year, and it may well be right. So this is not proof that customers have suddenly stopped paying for the integrated answer. But it is a reminder that Nvidia's current economics still depend on keeping that premium intact. The more the market shifts toward cost-optimized alternatives and workload-specific hardware, the harder it becomes to assume those margins are simply the natural order of things.

Then there is geopolitics. Nvidia's position—naturally—is that selling chips globally, including to China, helps keep the American tech stack dominant because developers everywhere build on its ecosystem. The opposing argument is that any marginal compute sold abroad also accelerates capabilities that may eventually be used against American interests. Neither view is ridiculous. But the fact that Nvidia's business model depends so heavily on winning that argument in Washington tells you something important: this is not a self-contained market story. It is an industrial story sitting inside a geopolitical one.

That matters because geopolitics can do what competitors sometimes cannot. A regulatory decision in Washington created a multi-billion-dollar inventory problem almost overnight. Even the toll collector does not control the road.

So the moat may not leak all at once. More likely, it leaks in stages: first through inference, then through custom accelerators, then through local and lower-cost deployments, and all the while through politics that can distort product lines and redirect demand. Nvidia can remain the central company in AI infrastructure and still find that the market around it has become less obedient, less uniform, and less willing to pay peak Nvidia prices for every task.

That is what negotiated erosion looks like. Not the end of the moat. Just the end of the idea that every road has to run through it forever.

Best Current Answer

The electrons keep arriving. The tokens keep leaving. And somewhere in the middle sits a company that spent two decades making graphics cards for teenagers and accidentally became the infrastructure layer for the most important industrial buildout in technology.

That position is real. But the explanation for it is probably less flattering to Nvidia's mythology than the market often assumes.

The company is not winning simply because nobody can imagine a world without CUDA. It is winning because, in a market starved for time and capacity, nobody else can yet offer as much working AI infrastructure, at as much scale, fast enough.

That is a tremendous advantage. It is also, for now, the best current answer. But not necessarily the final structure of the industry.

Related reading:

- Google's TPU and the New Economics of AI Deployment (ARPU Thematic Report)

📊 Data > Narrative

We pull key data points to show you the mathematical reality of what's happening in tech.

The Widening Gap

- The Data: Over six quarters, AMD's data center revenue grew 54%, from $3.5 billion to $5.4 billion. Nvidia's grew 102%, from $30.8 billion to $62.3 billion. The Nvidia/AMD revenue multiple widened from 8.8x to a peak of 12.8x before settling at 11.5x in the most recent quarter.

- The Takeaway: AMD is growing, but Nvidia is scaling even faster from a vastly larger base. This is not what market share convergence usually looks like. Normally, the smaller rival grows quickly enough that the multiple begins to compress. Here, the opposite happened: the leader grew so fast that the revenue distance expanded even while the challenger improved.

You received this message because you are subscribed to ARPU newsletter. If a friend forwarded you this message, sign up here to get it in your inbox.